With a GPU, memory continues to grow without releasing · Issue #8236 · eclipse/deeplearning4j · GitHub

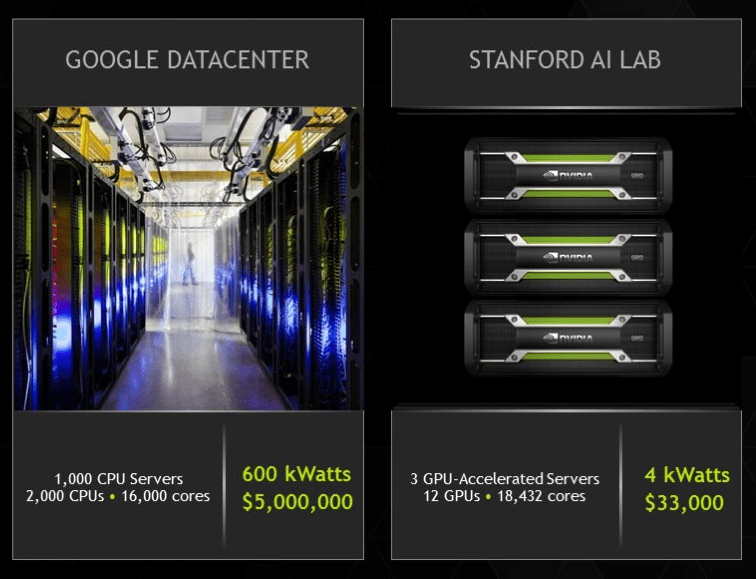

Deep Learning, GPUs, and NVIDIA: A Brief Overview - The NVIDIA Titan V Deep Learning Deep Dive: It's All About The Tensor Cores

Possible to force CPU / GPU nd4j backend for model train? · Issue #7215 · eclipse/deeplearning4j · GitHub

Using a trained model on GPU or CPU backend results in different outputs · Issue #4688 · eclipse/deeplearning4j · GitHub